conversation

design

the discipline of creating interactions between humans and machines that feel natural, helpful, and—most importantly—human.

Dada

Buttons

Sliders

invisible

To understand this shift, we need to look back at how we connect.

fixed

context

For thousands of years, the "interface" was static; the human brain did 100% of the processing while the media stayed put.

Oral tradition

~50,000 BCE

The primal baseline. Before screens, knowledge lived in the rhythm of the spoken word, relying on memory and real-time social feedback to survive.

oral tradition

Written language

~3200 BCE

By moving from sound to symbols, we learned to decouple information from the presence of a speaker, allowing ideas to travel across time.

written language

Printing press

1440

This milestone enabled a single voice to reach thousands, standardizing language but making communication static and one-way.

printing press

rigid

logic

We placed a logic gate between two people. In this wave, interaction was governed by a strict "if-this-then-that" decision tree: if you didn't follow the machine's exact path (waiting for the beep, pressing the right number), the conversation simply died.

Telephone

1876

The return of synchronous voice through a wire. It brought strict mechanical protocols and "logic gates" to conversation at a distance.

telephone

Email

1971

The digital evolution of the letter. It introduced asynchronous threading, teaching us to organize conversations into subject lines and archived data.

intent

mapping

The "Chatbot Boom" moved us from following lines to guessing needs. Instead of a rigid script, designers built "intent buckets," teaching the machine to calculate the probability of what a user wanted from a fragment of text. We stopped drawing flowcharts and started training models to recognize patterns.

SMS & chat

1992

Conversation moved into our pockets. Short, casual text messages made typing back and forth feel like talking, setting the expectations that early chatbots were built to meet.

texting / sms

Virtual assistant

2011

The peak of the intent era. Beginning with Siri, assistants such as Alexa, Google Assistant, Cortana, and Bixby taught us to talk to our devices, matching our speech against massive libraries of predefined "intents."

virtual assistants
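To make the idea of "intent buckets" more concrete, here is a minimal sketch, not how any particular assistant actually worked. Each intent is a hypothetical bucket of example phrases; a fragment of user text is scored against every bucket by word overlap, and the scores are turned into probabilities with a softmax. Real intent-era systems trained statistical classifiers instead of counting shared words, and the bucket names, phrases, and the 0.5 `threshold` below are illustrative assumptions.

```python
import math
import re

# Hypothetical intent buckets: each intent maps to a few example phrases.
INTENT_BUCKETS = {
    "check_balance": ["what is my balance", "how much money do i have", "show my account balance"],
    "transfer_money": ["send money to", "transfer funds", "pay my friend"],
    "reset_password": ["i forgot my password", "reset my password", "cannot log in"],
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z']+", text.lower()))


def intent_probabilities(fragment: str) -> dict[str, float]:
    """Score a fragment against every bucket, then softmax the scores into probabilities."""
    words = tokenize(fragment)
    scores = {}
    for intent, examples in INTENT_BUCKETS.items():
        # Score = best word overlap between the fragment and any example phrase.
        scores[intent] = max(len(words & tokenize(example)) for example in examples)
    total = sum(math.exp(s) for s in scores.values())
    return {intent: math.exp(s) / total for intent, s in scores.items()}


def classify(fragment: str, threshold: float = 0.5) -> str:
    """Return the most probable intent, or 'fallback' when no intent is confident enough."""
    probs = intent_probabilities(fragment)
    best = max(probs, key=probs.get)
    return best if probs[best] >= threshold else "fallback"


if __name__ == "__main__":
    print(classify("forgot my password again"))
    print(classify("hello there"))
```

The `fallback` branch is the intent era's weak spot in miniature: anything outside the predefined buckets ends in "Sorry, I didn't get that."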

agency

AI

In 2026, we’ve moved past "guessing" and into real-time reasoning and autonomy. We no longer write responses; we orchestrate AI agents that use tools, maintain long-term memory, and take independent action to achieve a user's goal. We are finally designing machines that can join the "invisible dance" of natural human talk.

Generative AI

2022

Now, the machine is a reasoning participant that synthesizes and responds in real time. We’ve moved past static scripts to design the "invisible dance" between human context and machine intelligence.

generative

Agentic AI

2024

The leap from talking to doing. These systems don't just process words; they use tools to execute multi-step tasks autonomously.

AI agents

The new era is about teaching machines to speak 'human.'

emerging

trends

Agentic Orchestration

We’ve moved beyond single bots to designing swarms—networks of specialized AI agents that collaborate to solve complex, multi-step goals. As designers, we manage how these agents talk to each other and escalate to humans.

Adaptive & Generative UI

The static screen is dying. We are now designing Adaptive UIs that build themselves in real time based on a user’s history, intent, and current context.

Multimodal Interfaces

Conversation in 2026 isn't just text; it’s a blend of voice, vision, and emotional intelligence. Products can now detect sentiment from your tone or expression (with consent) and react naturally, making the "Zero UI" experience feel truly ambient.

MX (Machine Experience) Design

We are no longer just designing for people; we are designing for the machines (LLMs and search agents) that read and summarize our content.
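As a rough illustration of the escalation side of Agentic Orchestration, here is a hypothetical router. The specialist agents are stub functions that return an answer plus a self-reported confidence, and the orchestrator hands the conversation to a human when no agent matches or the chosen agent is unsure. The agent names, the keyword routing table, and the 0.7 threshold are all assumptions for this sketch; real orchestration layers usually route with a model rather than keywords.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentResult:
    answer: str
    confidence: float  # self-reported by the agent, 0.0 to 1.0


# Hypothetical specialist agents, stubbed as plain functions.
def billing_agent(message: str) -> AgentResult:
    return AgentResult("Your last invoice was paid.", 0.9)


def shipping_agent(message: str) -> AgentResult:
    return AgentResult("I couldn't find that order.", 0.4)


# Hypothetical keyword routing table: which specialist handles which topic.
ROUTES: dict[str, Callable[[str], AgentResult]] = {
    "invoice": billing_agent,
    "refund": billing_agent,
    "order": shipping_agent,
    "delivery": shipping_agent,
}


def orchestrate(message: str, threshold: float = 0.7) -> str:
    """Route a message to a specialist agent; escalate to a human when unsure."""
    lowered = message.lower()
    # Pick the first agent whose keyword appears in the message, if any.
    agent = next((fn for keyword, fn in ROUTES.items() if keyword in lowered), None)
    if agent is None:
        return "ESCALATE: no agent matches this request"
    result = agent(message)
    if result.confidence < threshold:
        return f"ESCALATE: {agent.__name__} confidence {result.confidence:.2f}"
    return result.answer
```

The design choice worth noticing is that escalation is a first-class outcome of the router, not an error path: deciding when a human takes over is part of the conversation design.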

Books, blogs, and accounts I've found helpful as a beginner learning more about the field

resources

and events

Interface That Augment or Replace

ZEH

Stay on topic. The information provided should be relevant to the current exchange.

HomePod meets Apple Intelligence

Sahil Afrid Farookhi

Stay on topic. The information provided should be relevant to the current exchange.

Crossing the uncanny valley of conversational voice

Sesame team

Stay on topic. The information provided should be relevant to the current exchange.

articles and blogs

The Sound of the Future: The Coming Age of Voice Technology

Designing Voice User Interfaces

Conversations with Things: UX Design for Chat and Voice

books

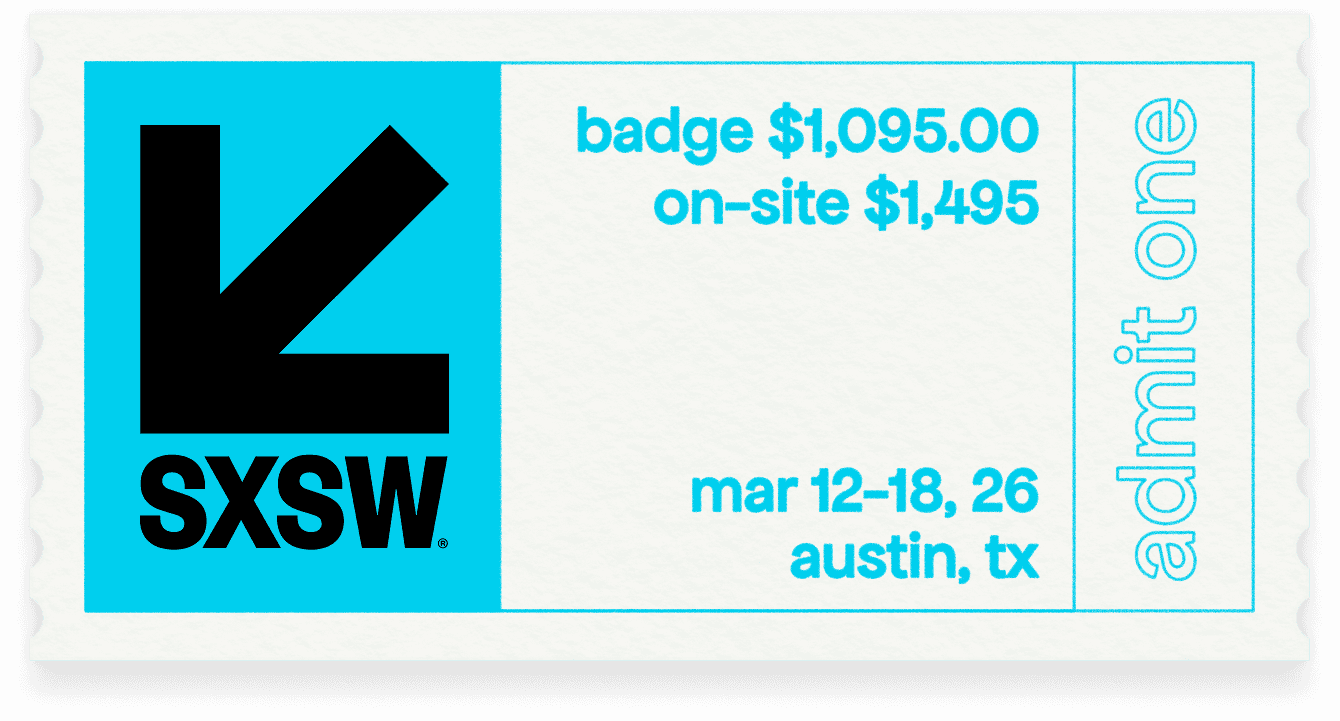

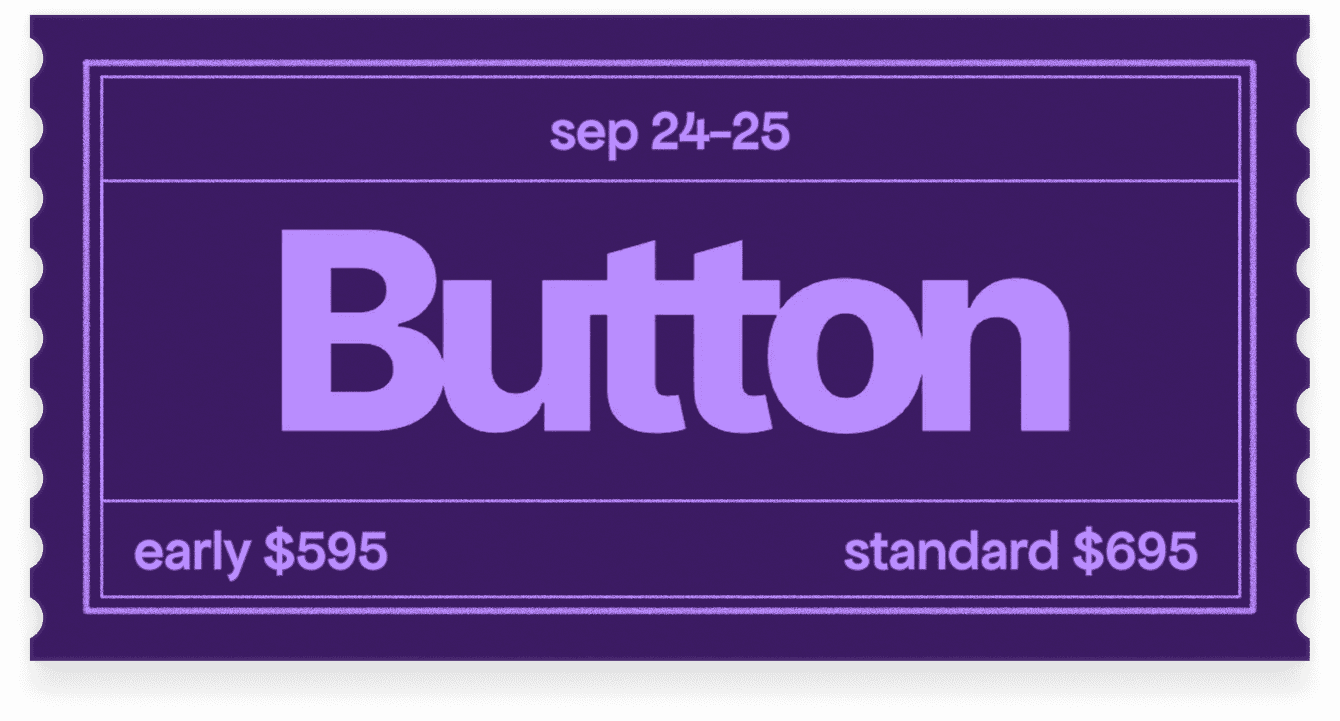

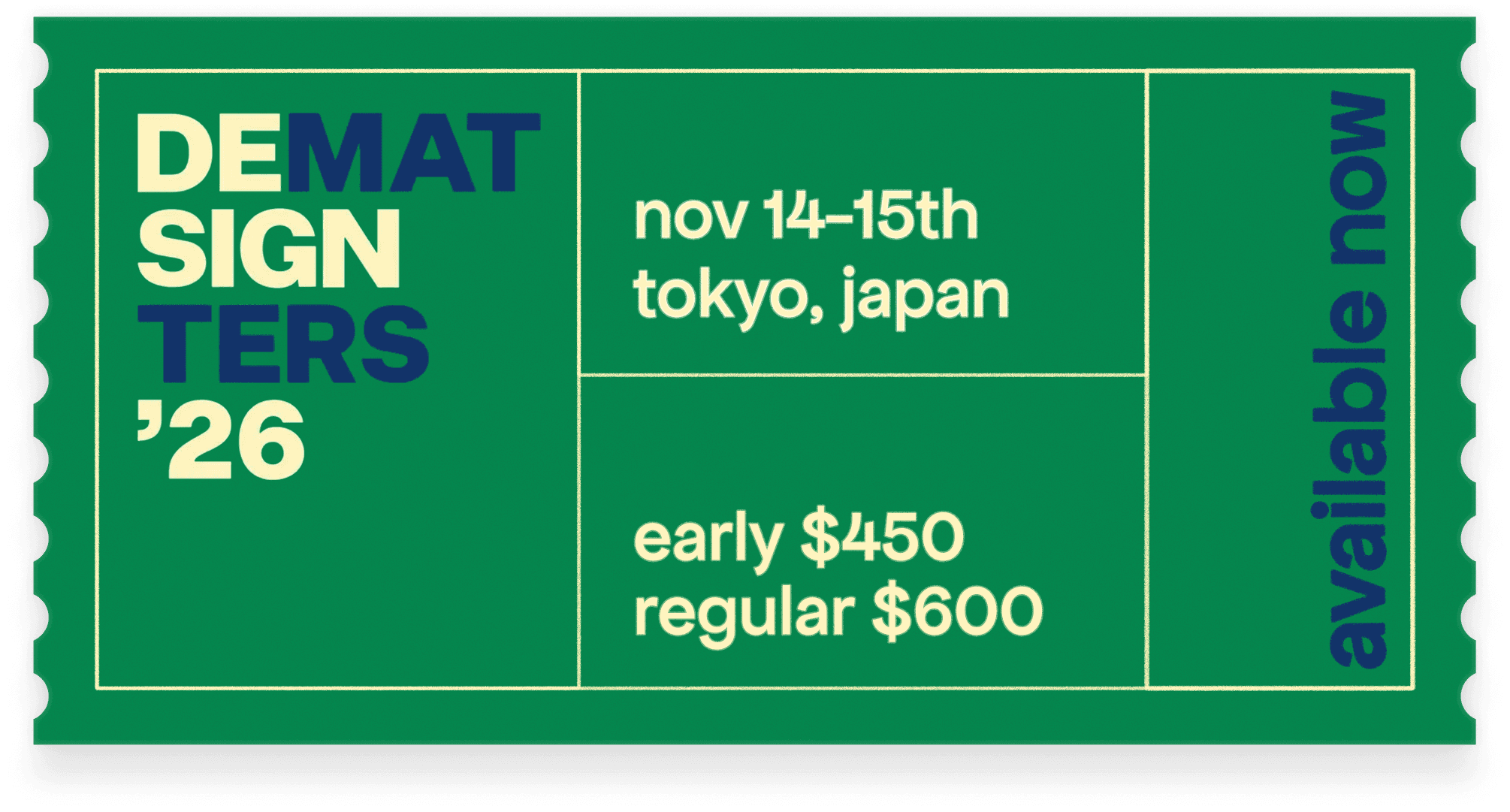

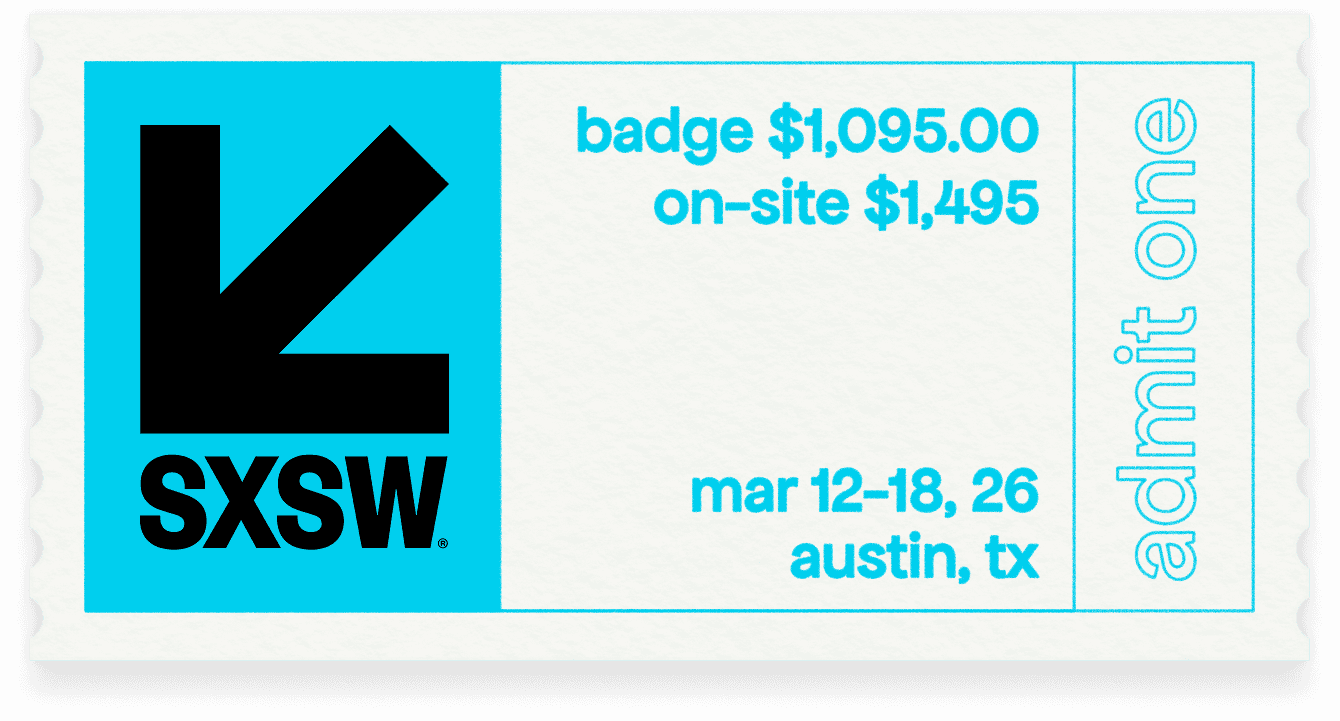

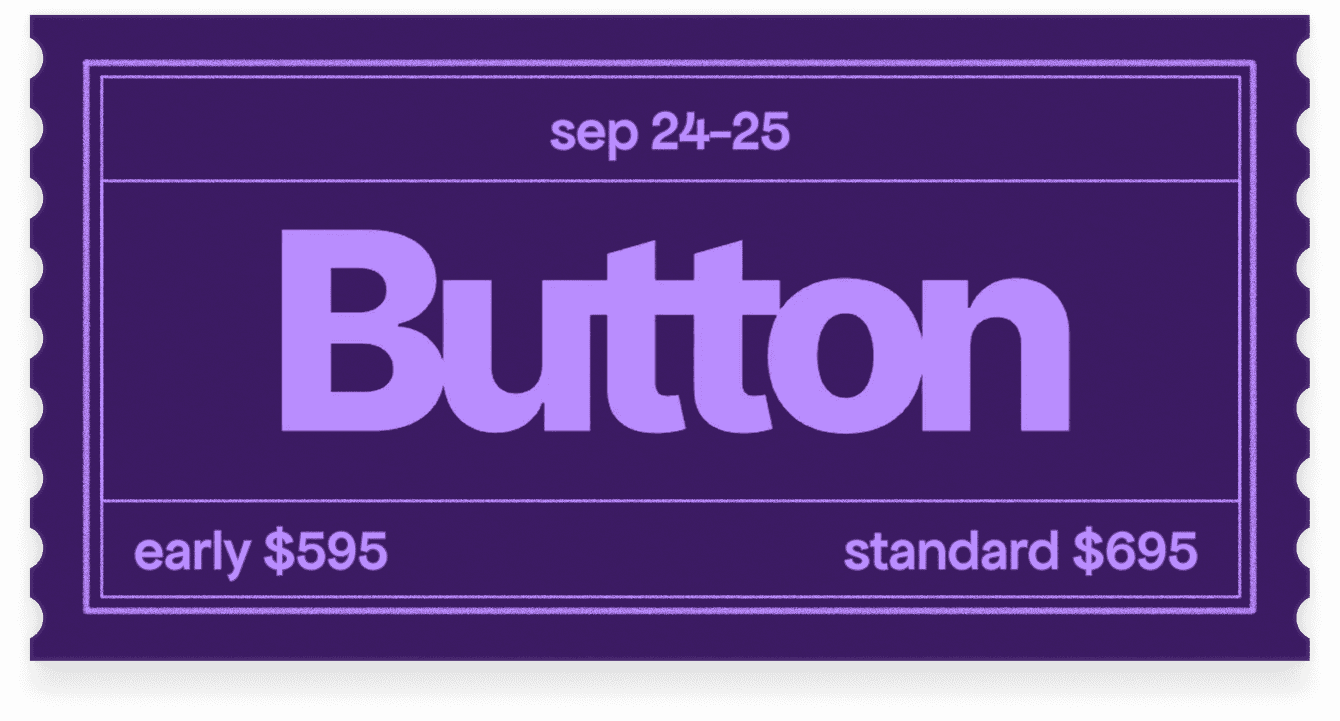

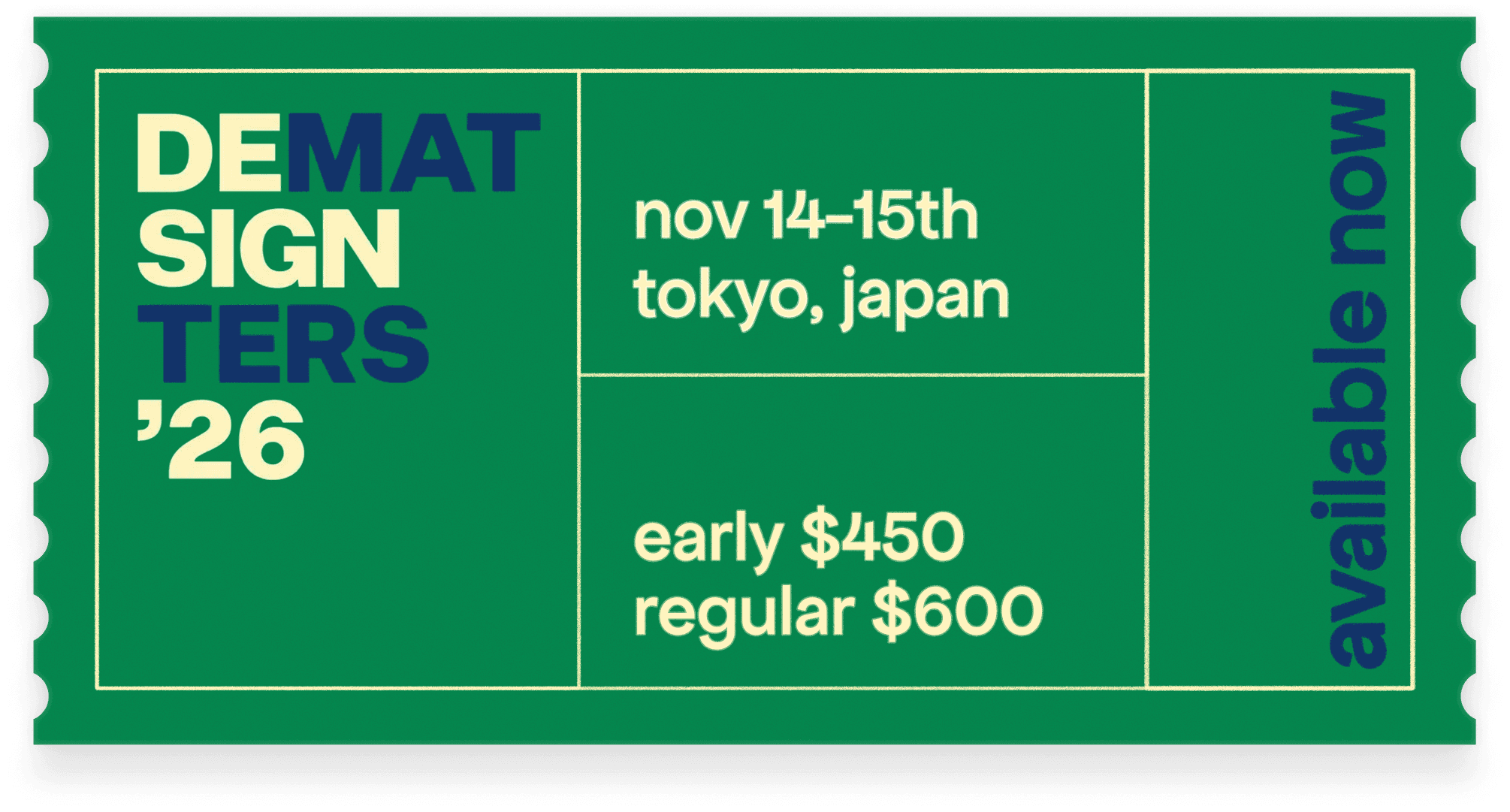

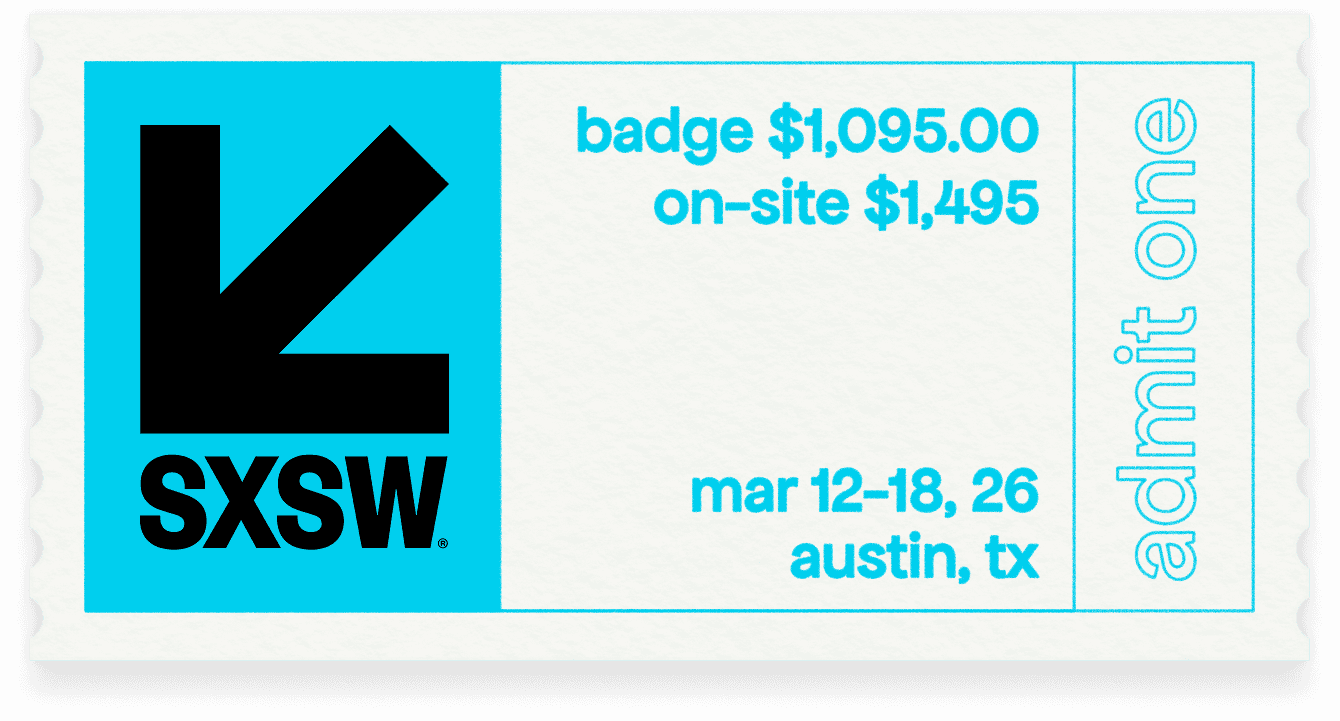

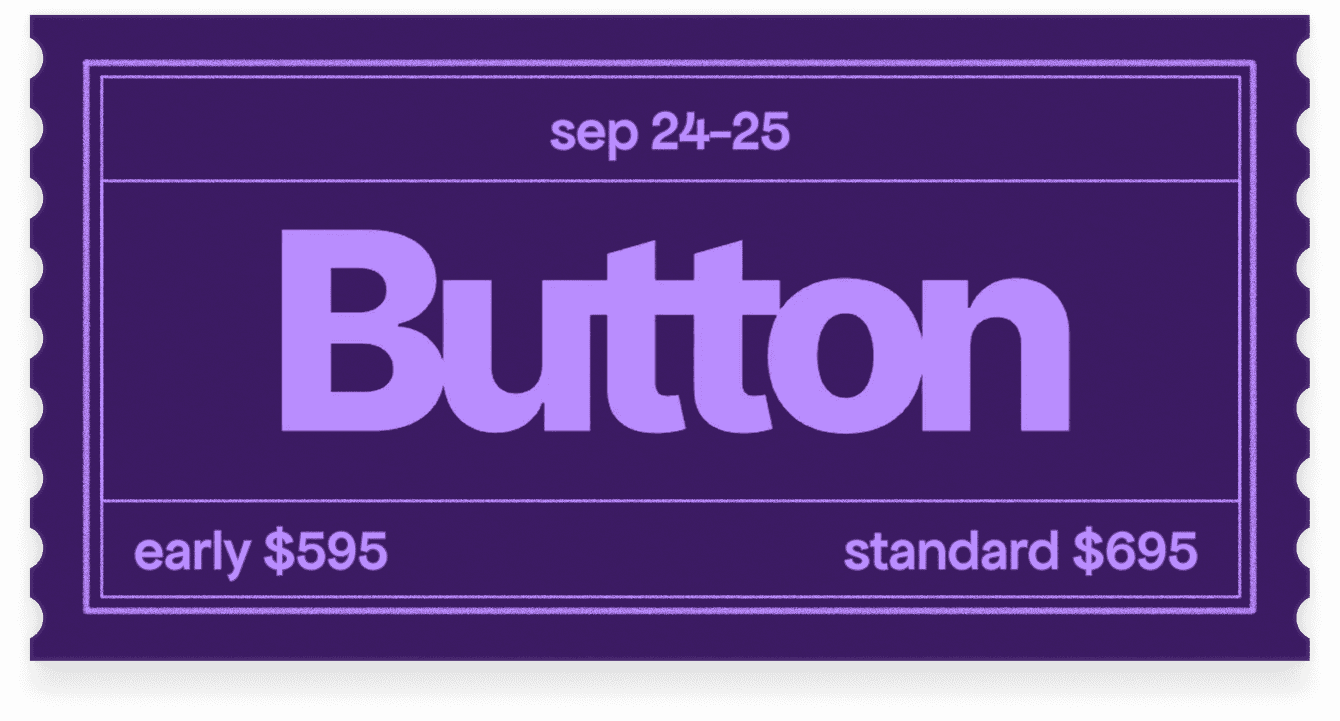

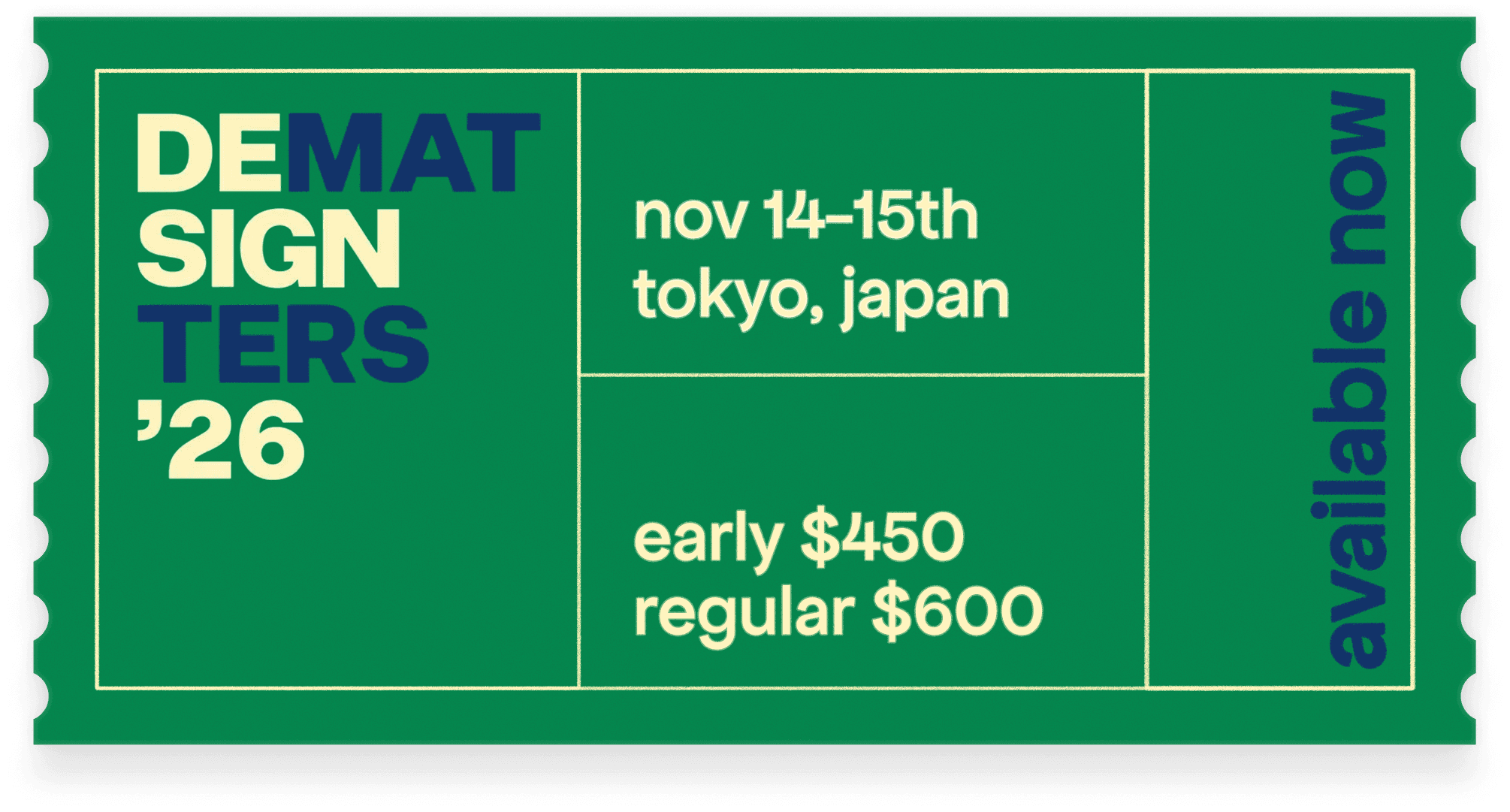

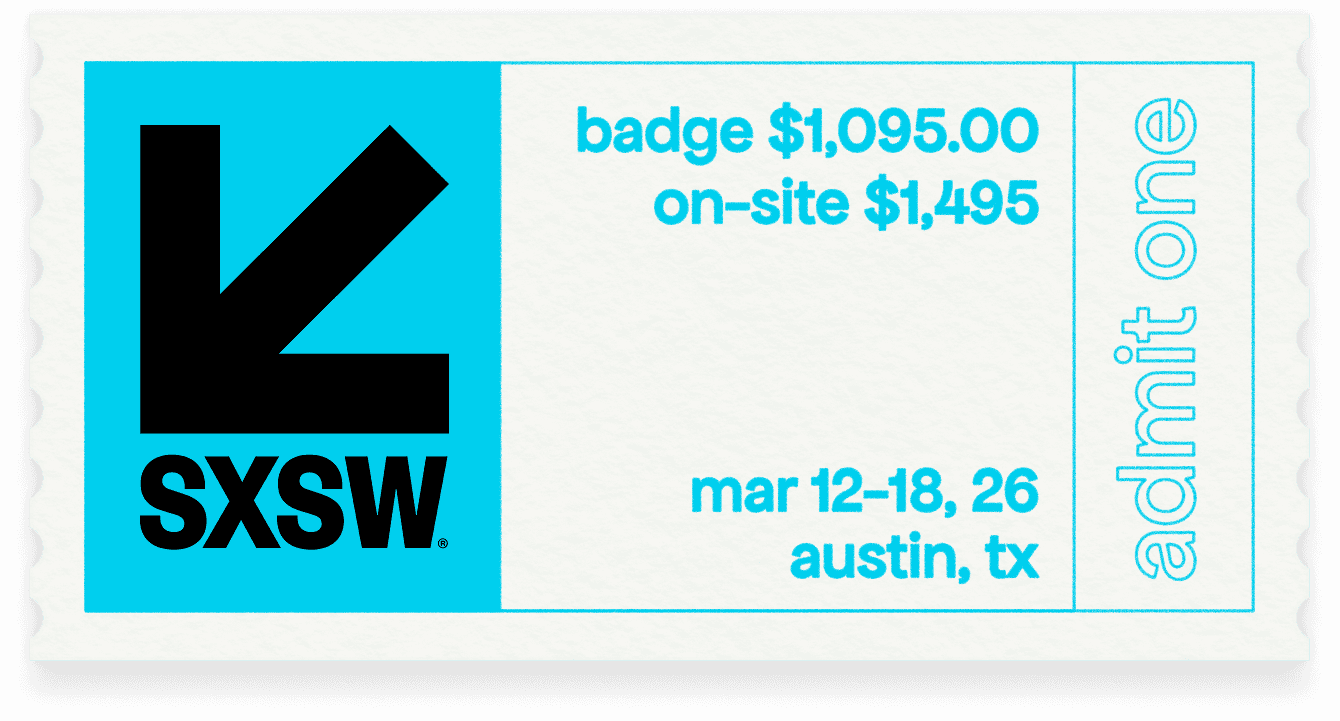

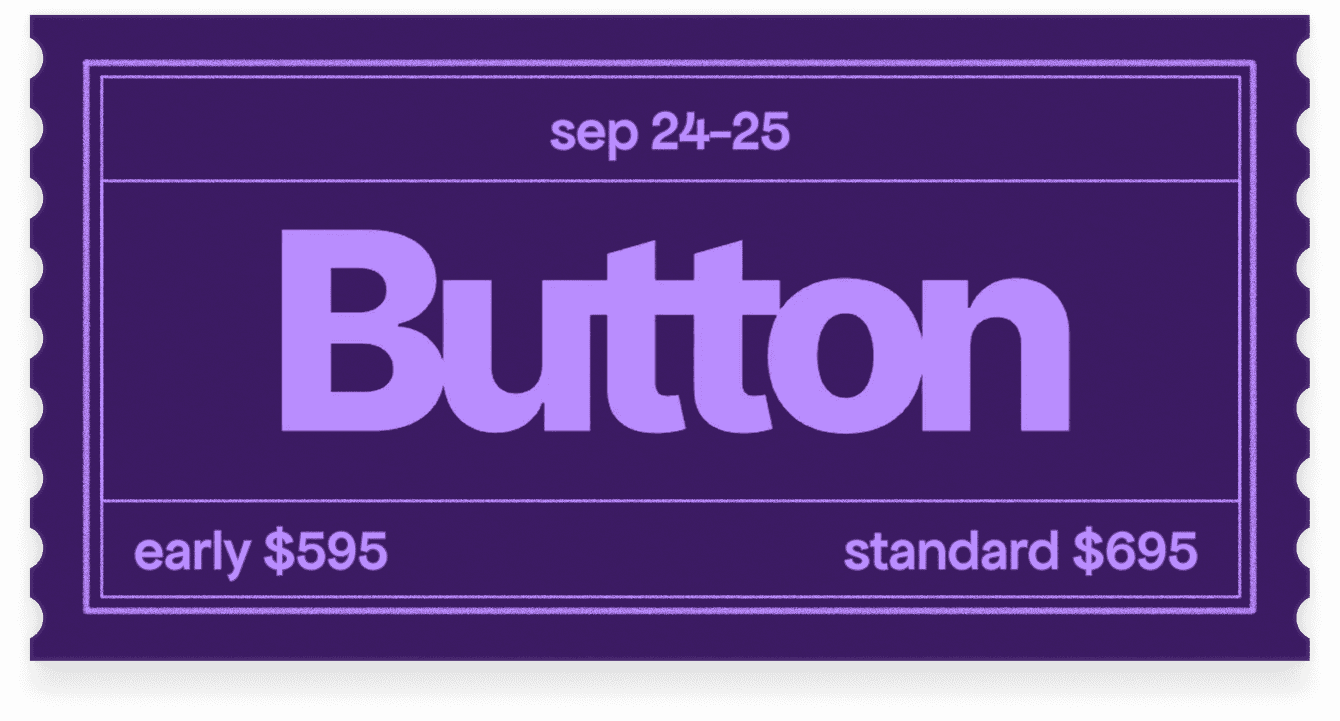

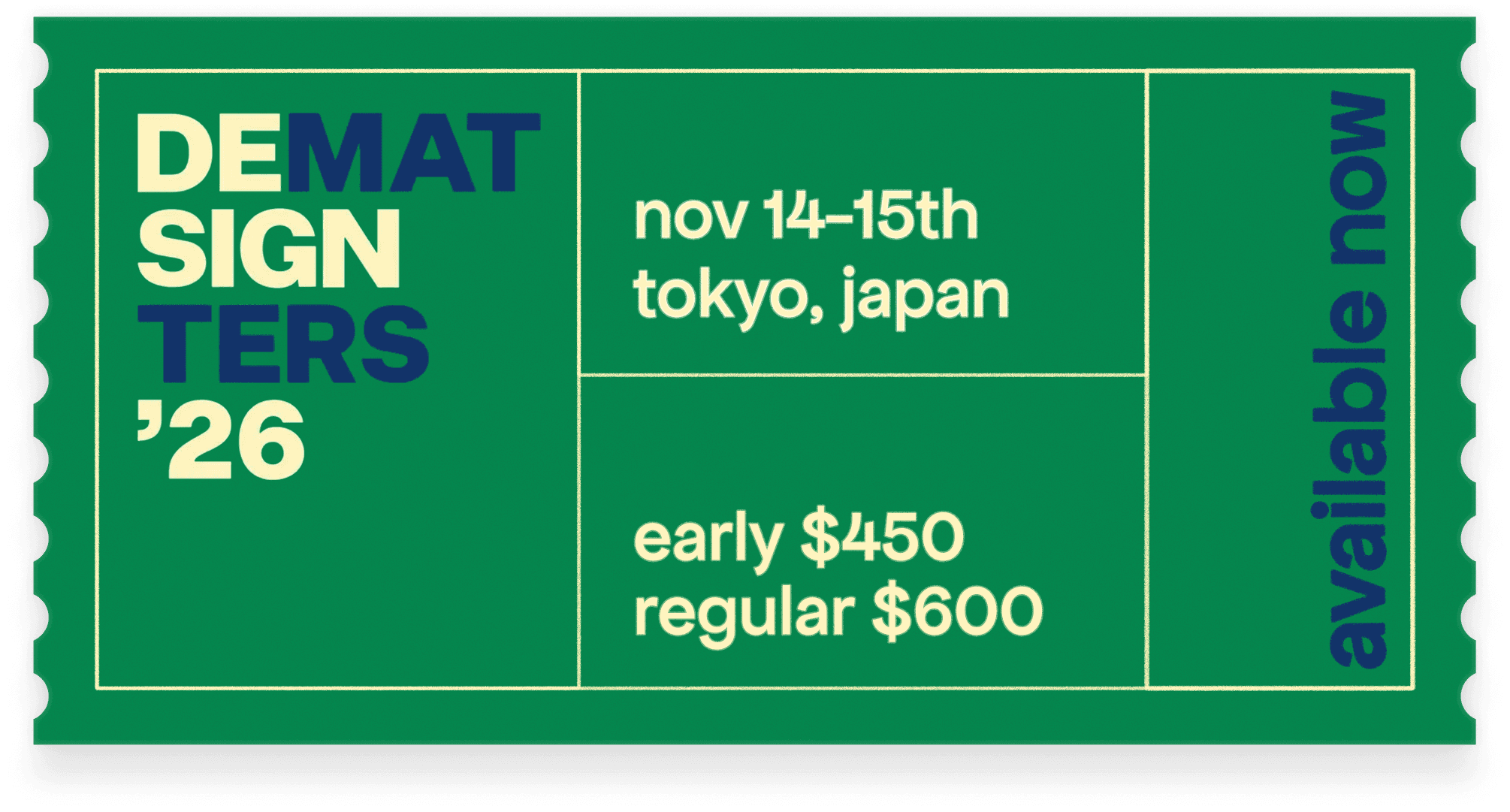

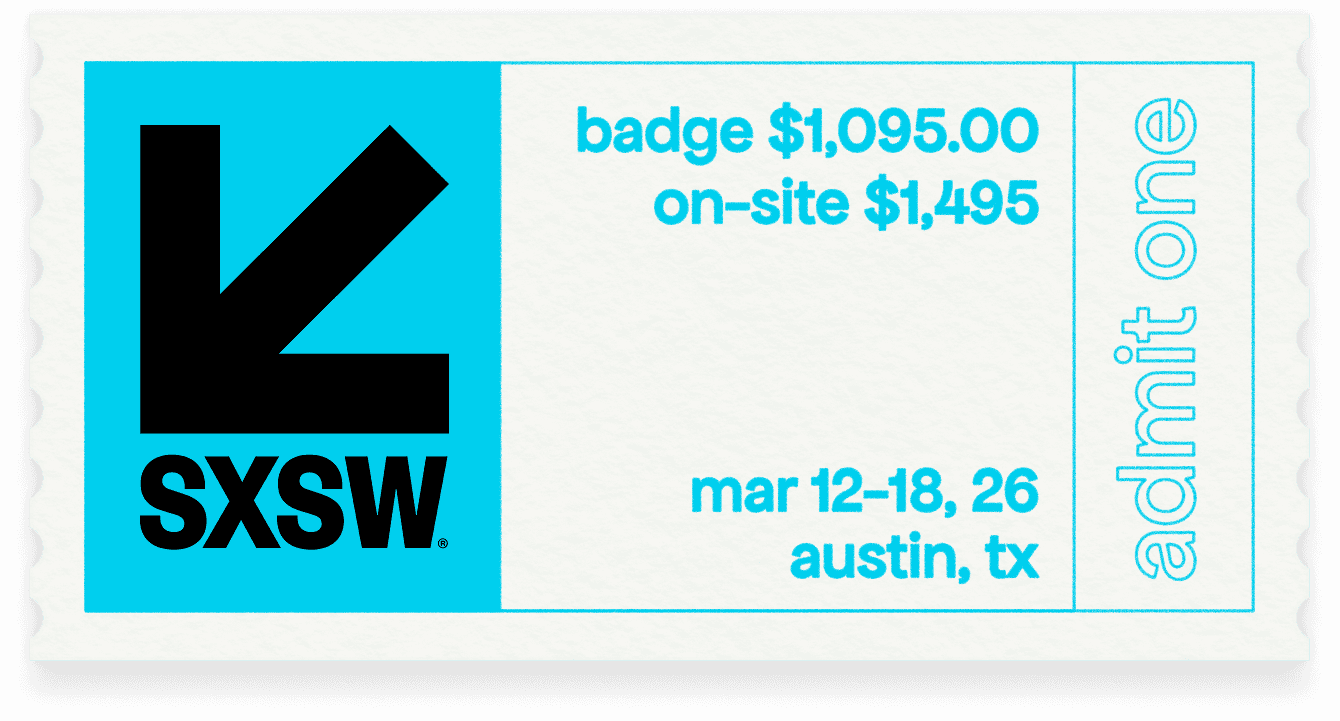

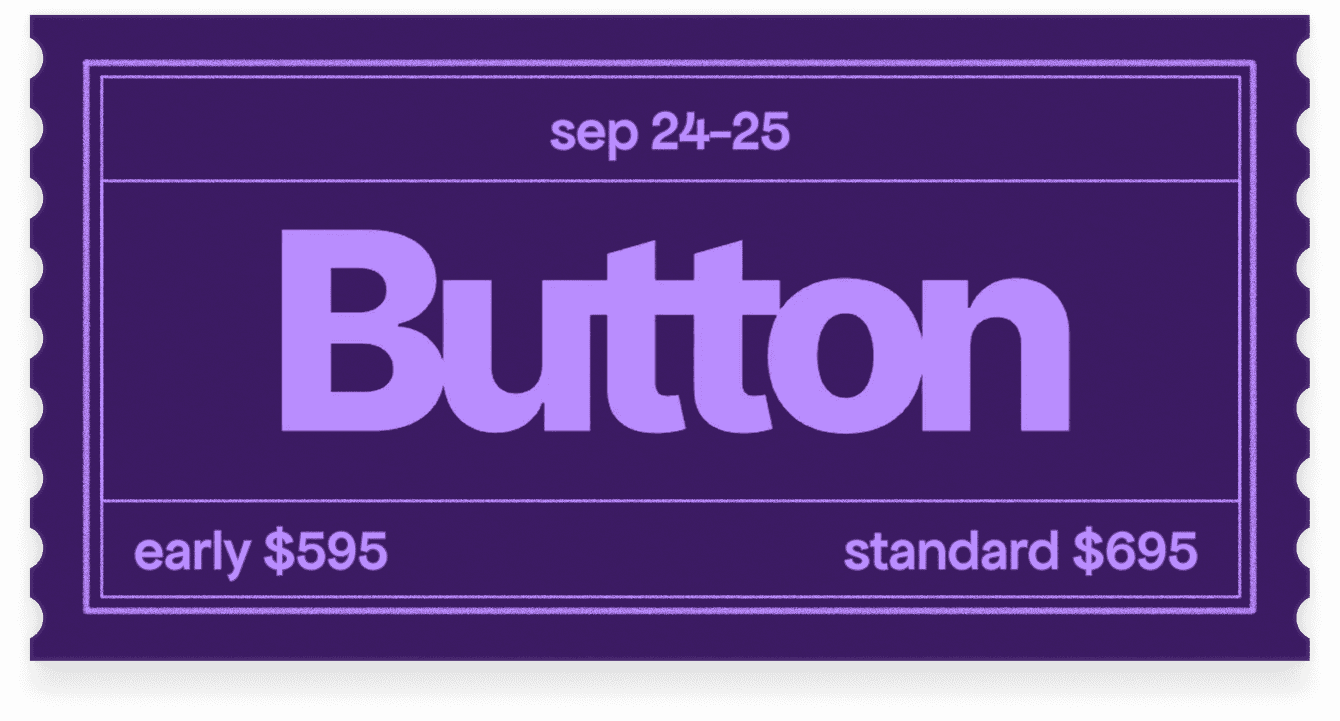

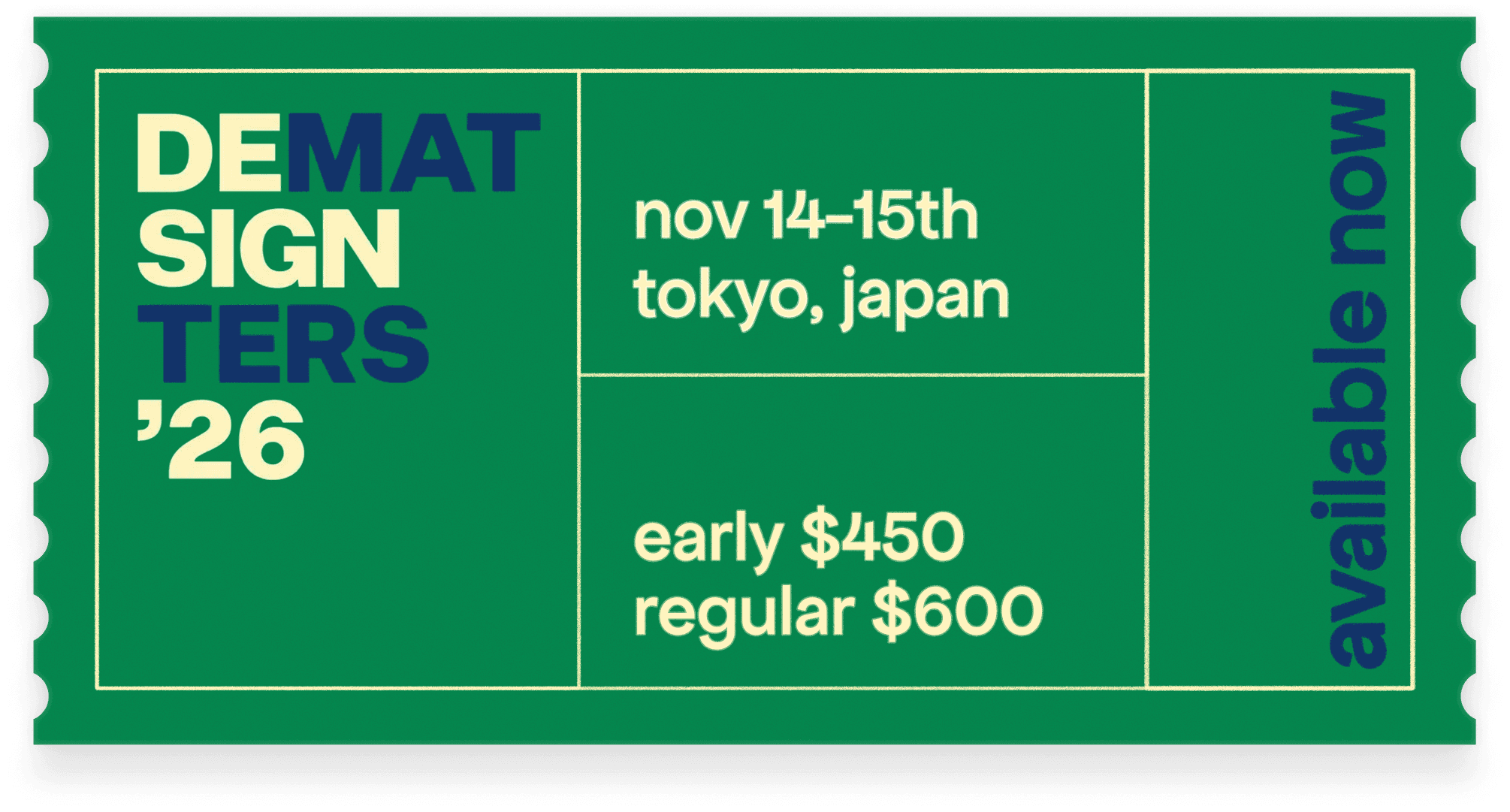

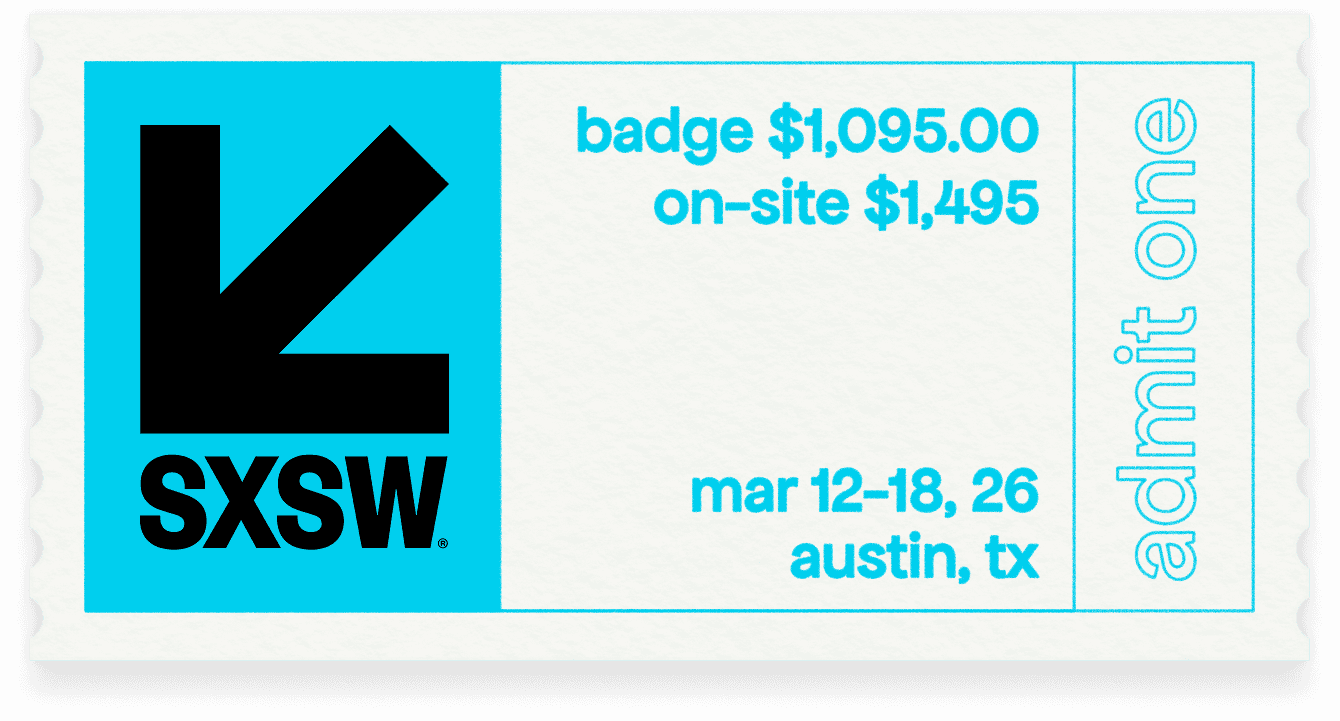

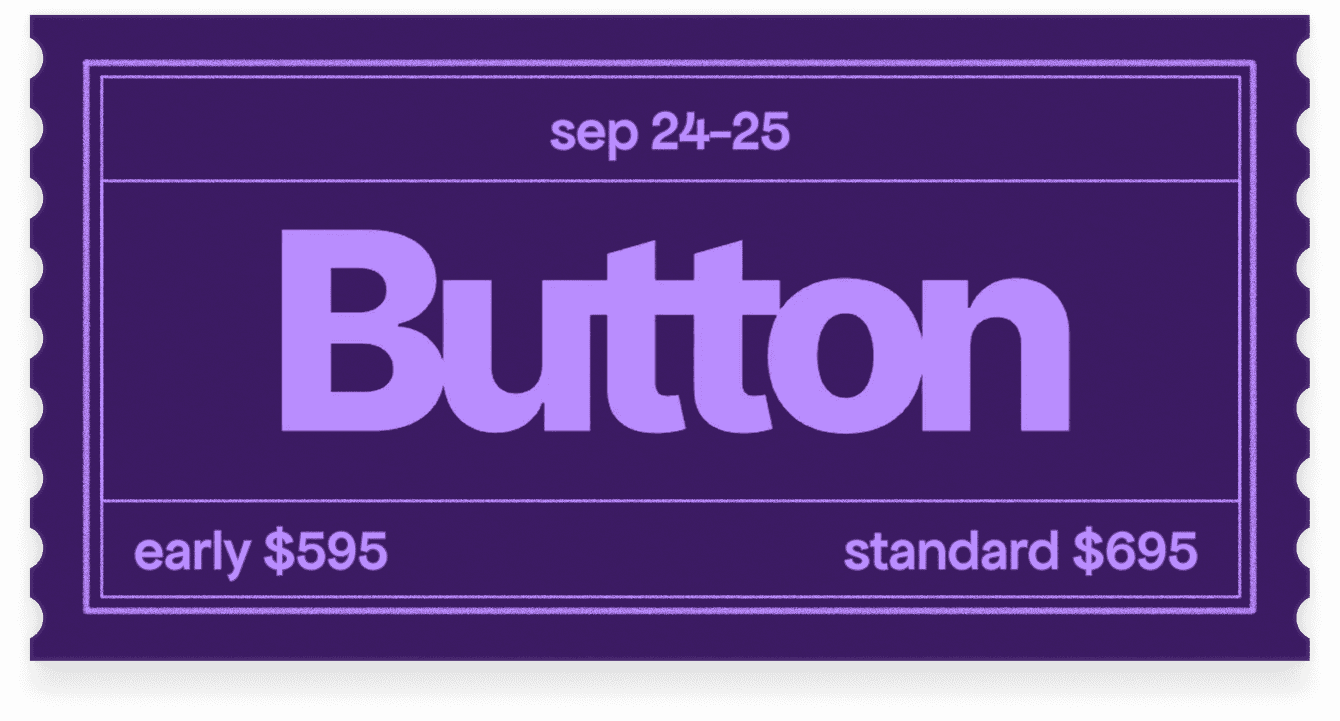

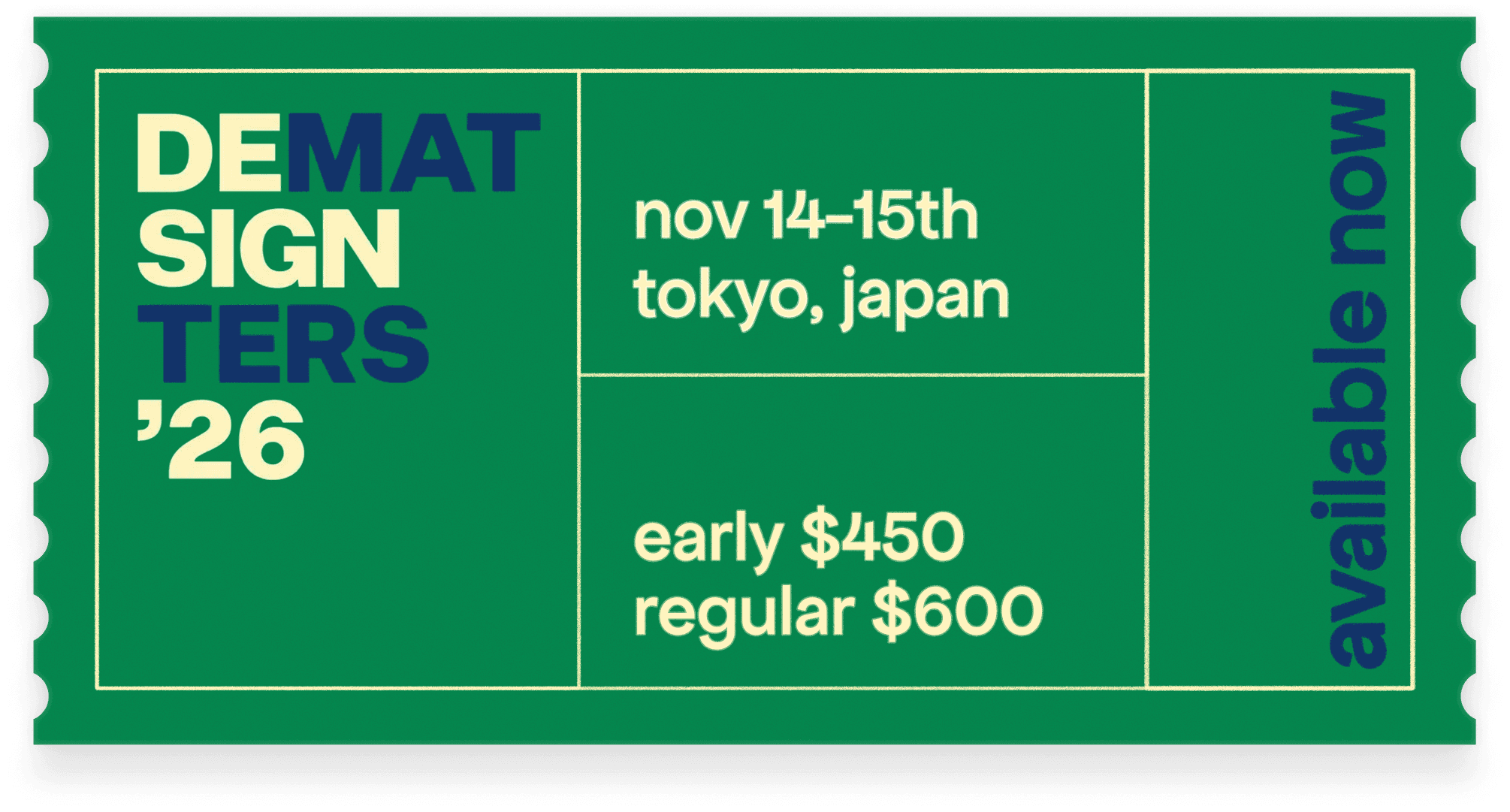

events and conferences
